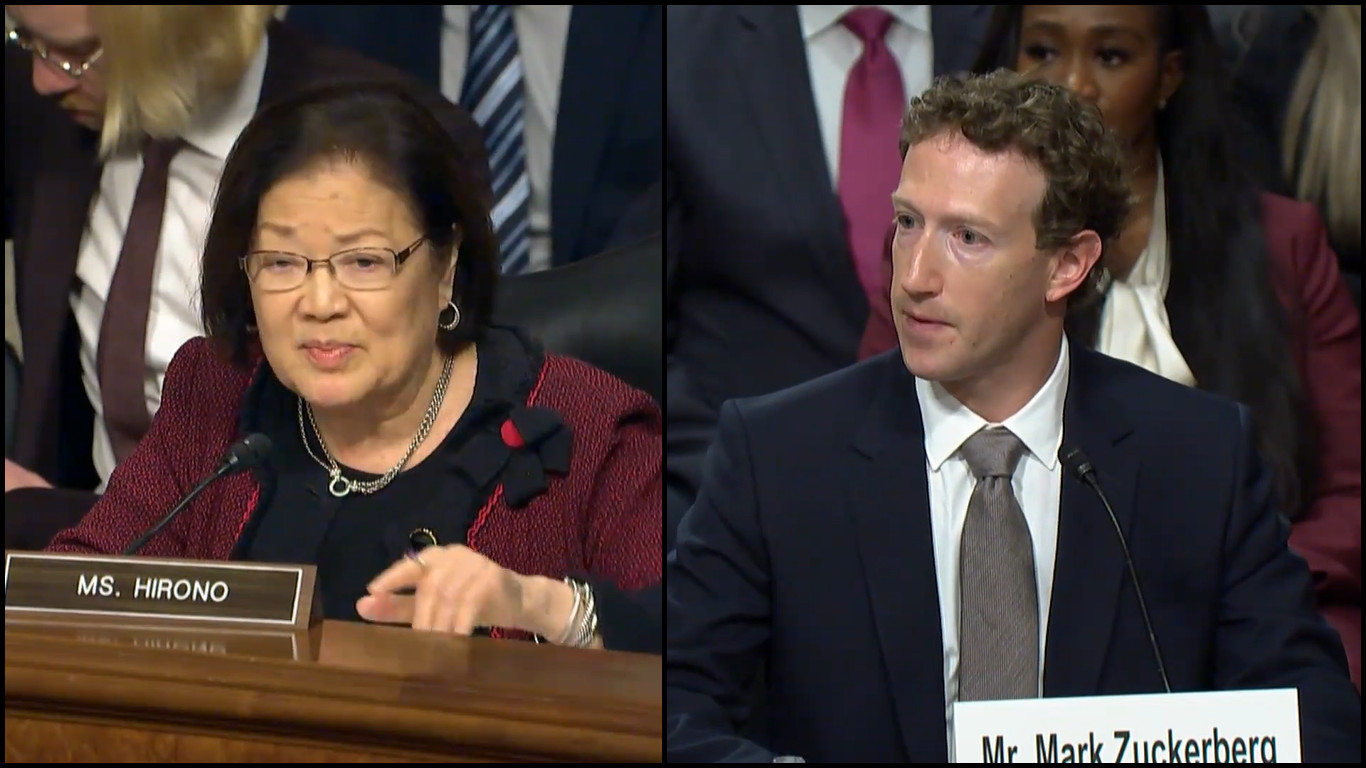

Video courtesy Sen. Mazie Hirono

(BIVN) – U.S. Senator Mazie Hirono (D, Hawaiʻi) on Wednesday questioned the top executives of major social media companies about child safety measures on their platforms.

Hirono was part of the U.S. Senate Judiciary Committee hearing, “Big Tech and the Online Child Sexual Exploitation Crisis,” held in Washington.

Participating in the hearing were chief executive officers Linda Yaccarino of X, Shou Chew of TikTok, Evan Spiegel of Snap, Jason Citron of Discord, and Mark Zuckerberg of Meta.

“Children face all sorts of dangers when they use social media,” Sen. Hirono said, “from mental health harms to sexual exploitation; even trafficking.”

Hirono shared her concerns about the creation and distribution of child sexual abuse material, or CSAM, using the online platforms. Sen. Hirono said:

“Sex trafficking is a serious problem in my home state of Hawaiʻi, especially for native Hawaiian victims. That social media platforms are being used to facilitate this trafficking, as well as the creation and distribution of CSAM is deeply concerning, but it’s happening.

For example, several years ago, a military police officer stationed in Hawaiʻi was sentenced to 15 years in prison for producing CSAM as part of his online exploitation of a minor female. He began communicating with this 12-year-old girl through Instagram. He then used Snapchat to send her sexually explicit photos and to solicit such photos from her. He later used these photos to blackmail her. And just last month, the FBI arrested a Neo-Nazi cult leader in Hawaiʻi who lured victims to his Discord server. He used that server to share images of extremely disturbing child sexual abuse material, interspersed with Neo-Nazi imagery. Members of his child exploitation and hate group are also present on Instagram, Snapchat, X, and TikTok. All of which they used to recruit potential members and victims. In many cases, including the ones I just mentioned, your companies played a role in helping law enforcement investigate these offenders. But by the time of the investigation, so much damage had already been done.

This hearing is about how to keep children safe online, and we’ve listened to all of your testimony to seemingly impressive safeguards for young users. You try to limit the time that they spend, you require parental consent, you have all of these tools. Yet, trafficking and exploitation of minors online and on your platforms continues to be rampant. Nearly all of your companies make your money through advertising; specifically, by selling the attention of your users. Your product is your users.”

Sen. Hirono later asked Zuckerberg if his company will “report the number of underage children on your platforms who experience unwanted CSAM, and other kinds of messaging, that harm them? Will you commit to citing those numbers to the SEC when you make your quarterly report?”

“I’m not sure it would make as much sense to include it in the SEC filing,” Zuckerberg answered, “but we file it publicly, so that way everyone can see this. And I’d be happy to follow up and talk about what specific metrics.”

“We don’t allow people under the age of 13 on our service,” Zuckerberg added, “so if we find anyone who’s under the age of 13, we remove them from our service. Now, I’m not saying that people don’t lie, and that there aren’t anyone who’s under the age of 13 who’s using it. But… we’re not going to be able to count how many people there are, because fundamentally, if we identify that someone is underage, we remove them from the service.”

Hirono also asked Zuckerberg why teens are able to opt out of Meta’s automatic safeguards, such as restrictive privacy and content sensitivity settings. “It’s not mandatory that they remain on these settings, they can opt out?” Hirono asked.

“Some teens want to be creators and want to have content that they share more broadly,” Zuckerberg answered, “and I don’t think that that’s something that should just blanketly be banned.”

by Big Island Video News, 12:01 pm

STORY SUMMARY

WASHINGTON - The U.S. Senator from Hawaiʻi questioned the CEOs of five major social media platforms, including Facebook's Mark Zuckerberg, during a Senate Judiciary Committee hearing.